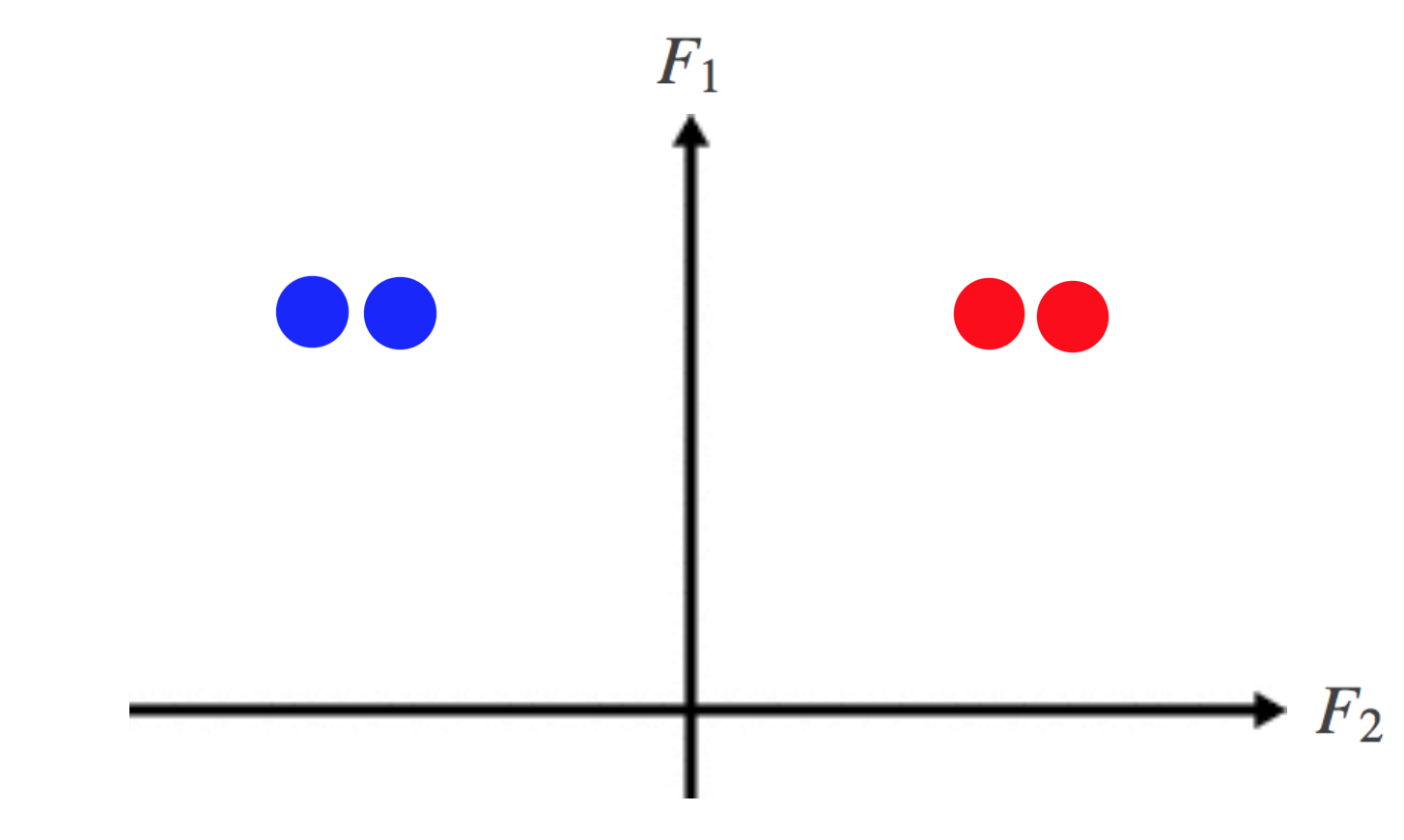

The intuitive picture I have in mind is this: when one looks at the superimposed graphs of the densities of the two measures (pretending that they have densities), the relative entropy measures how much they differ in a vertical sense only, whereas the Wasserstein metric allows for sideways nudgings of the two graphs.

For background, the relative entropy, or Kullback-Leibler divergence, was developed within information theory for measuring the similarity between two pdfs. It acts as a measure of the distance between two probability distributions, although it is not a metric. Mutual information is a special case of relative entropy, and entropy then becomes the self-information of a random variable. All these quantities are closely related and share a number of simple properties.

For an example, look at the point masses $\delta_0$ and $\delta_h$ supported at $0$ and $h$, respectively. The Wasserstein distance between these is $O(h)$, which is small if $h$ is small. But for $h\ne0$ the relative entropy is infinitely large, as the two measures are mutually singular.

For an example the other way around, let $f(x)$ be the density of a uniform rv on an interval whose length is of order $N$, and let $$g(x)=(1-\epsilon)f(x)$$ for $x$ in one half of that interval and $$g(x)=(1+\epsilon)f(x)$$ otherwise. The Wasserstein distance is something like $O(N\epsilon)$ (because we have to transfer about $\epsilon$ of the mass over a distance of about $N/2$), but the relative entropy is something like $O(\epsilon)$ because $\log\bigl(f(x)/g(x)\bigr) = O(\epsilon)$. By a proper choice of $\epsilon$ (say $\epsilon = N^{-1/2}$ with $N$ large), we can make the Wasserstein distance big but the relative entropy small.
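As a numerical sanity check of the two examples, here is a minimal sketch in Python using only NumPy. The helper names `wasserstein_1d` and `relative_entropy`, the grid discretization, and the choice of $[0, N]$ as the interval for the uniform density are assumptions made for illustration; they are not part of the argument itself.

```python
import numpy as np


def wasserstein_1d(x, p, q):
    """W1 between discrete distributions p and q on the sorted grid x.

    Uses the one-dimensional identity W1 = integral of |F_p - F_q|.
    """
    cdf_gap = np.cumsum(p - q)[:-1]
    return float(np.sum(np.abs(cdf_gap) * np.diff(x)))


def relative_entropy(p, q):
    """KL(p || q) in nats; infinite when p has mass where q has none."""
    support = p > 0
    if np.any(q[support] == 0):
        return float("inf")
    return float(np.sum(p[support] * np.log(p[support] / q[support])))


# Example 1: point masses at 0 and h.
h = 0.01
x_point = np.array([0.0, h])
delta_0 = np.array([1.0, 0.0])
delta_h = np.array([0.0, 1.0])
w_point = wasserstein_1d(x_point, delta_0, delta_h)
kl_point = relative_entropy(delta_0, delta_h)

# Example 2: uniform density f, tilted by a factor (1 -/+ eps) on each half.
# The interval [0, N] is an assumed choice; n grid cells approximate the densities.
N, eps, n = 10_000.0, 0.01, 1000
x_unif = (np.arange(n) + 0.5) * N / n            # cell midpoints
f = np.full(n, 1.0 / n)                          # uniform cell masses
g = np.where(x_unif < N / 2, (1 - eps) * f, (1 + eps) * f)
w_unif = wasserstein_1d(x_unif, f, g)
kl_unif = relative_entropy(f, g)

print("point masses: W1 =", w_point, " KL =", kl_point)
print("tilted uniform: W1 =", w_unif, " KL =", kl_unif)
```

Under these assumptions the point masses give a Wasserstein distance of $h$ and an infinite relative entropy. The tilted uniform gives a Wasserstein distance of $\epsilon N/4$ and a relative entropy of $-\tfrac12\log(1-\epsilon^2)\approx\epsilon^2/2$, which is consistent with the $O(N\epsilon)$ and $O(\epsilon)$ estimates.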